Keyword Research Tools Can’t Accurately Predict Search Volume. Here’s Why

Estimating how much traffic you’ll get from writing a blog post is the primary reason to do keyword research.

Keyword research tools claim they know exactly how much search volume every search query gets, which makes it quite tempting to pony up $100/month for some of them. If the tools could predict search volume, they’d be well worth the price tag.

The problem is these tools can’t predict search volume with any accuracy. It’s a blogging myth. And it’s essential to understand why they can’t so that you can target better blog topics.

There Are Only Three Ways To Get Google Search Volume Data

Google doesn’t release its internal traffic data for the world to see. Keyword research tools can only guess how much traffic a query gets by using one of the following 3 methods.

- Winning the keyword phrase on their own blog and using the actual traffic data.

- Using the Google Keyword Planner Tool for traffic data.

- Using Clickstream data. (Capturing a sampling of search queries and using that to project traffic volume.)

Each of these methods has obvious flaws. Understanding those flaws will clear up why keyword research tools can’t accurately predict search volumes. Let’s get into them.

The Problem With Using Actual Data

Winning a keyword helps keyword research tools to test and validate their traffic predictions.

The problem is that it’s hard to rank #1 for any keyword, let alone billions of them. Keyword research tools simply don’t have the resources to rank #1 for a statistically significant number of keywords. This method isn’t valuable for making predictions across the entire web, although it could be used to test how good their predictions actually are.

The Problem With Using The Google Keyword Planner

1) Keywords in Google Keyword Planner are Broad Match

Enter a broad match, a phrase match, and an exact match keyword into the Google Keyword Planner. You’ll only get traffic data back for the broad match.

The volume data Google returns doesn’t represent the number of people that typed “keyword research” into Google. Here’s Google’s example for describing how broad matches work.

If you don’t see the issue here, let me clarify. Google is combining the search traffic estimates for “low-calorie recipes,” “Mediterranean diet books,” and more into “low-carb diet plan.”

We have no way to tell how many keywords are getting stuffed into the volume estimates from the Google Keyword Planner. This is not good data for bloggers.

2) Google Keyword Planner is for Advertisers, Not Bloggers

Relevance is one of Google’s most significant ranking factors for blog posts.

This means you can’t write a blog post and expect to rank for both “low-calorie recipes” and “Mediterranean diet books.” As a new blogger, you’d have to target one or the other to have any chance to compete for either phrase.

But for advertisers? All these phrases may be relevant enough to sell your diet product.

Google uses relevance to help determine ad placements as well. But, for ad placements, Google cares as much or more about how much you’re paying them than it does about relevance. If you bid high enough, it’s OK if your advertisement is less relevant than the competition. Google will simply charge you more money to show your ad.

Relevance doesn’t matter as much for ads as it does for blog posts. This is why using broad match data makes sense for advertisers but not for bloggers.

3) Keyword Planner Data Isn’t Trustworthy

Moz has a great writeup about some of the logical inconsistencies within Google Keyword Planner.

One of them is that Google uses rounded averages of keyword data. That means a keyword like “Thanksgiving” is shown as getting consistent traffic every single month. In reality, there is a massive spike around Thanksgiving and very little traffic the rest of the year.

That problem gets worse when you combine it with traffic buckets. What’s a traffic bucket? It’s one of the fixed values that Google rounds to when calculating rounded averages.

If a keyword got 223,437 searches in a month, Google rounds that number to the nearest traffic bucket. So if the nearest traffic bucket was 201,000 searches, Google rounds to that number. Then it uses the traffic bucket’s number for its rounded average calculation.

The result is that the reported search volumes are all off by a decent percentage.
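To make the averaging and bucketing concrete, here is a minimal Python sketch. The bucket values and the monthly search counts are made-up assumptions for illustration (the real buckets may differ), and the bucket-then-average-then-bucket order is just one reading of the description above.

```python
from typing import Sequence

# Hypothetical traffic buckets; the real values Google uses may differ.
BUCKETS = [0, 10, 100, 1_000, 10_000, 27_000, 110_000, 201_000, 246_000]


def nearest_bucket(searches: float) -> int:
    """Return the bucket value closest to the given search count."""
    return min(BUCKETS, key=lambda bucket: abs(bucket - searches))


def reported_volume(monthly_searches: Sequence[int]) -> int:
    """Round each month to its bucket, average, then bucket the average."""
    bucketed = [nearest_bucket(m) for m in monthly_searches]
    average = sum(bucketed) / len(bucketed)
    return nearest_bucket(average)


# Hypothetical seasonal keyword: 11 quiet months and one big spike.
seasonal = [8_000] * 11 + [223_437]

print(nearest_bucket(223_437))    # 201000
print(reported_volume(seasonal))  # 27000
```

In this toy example, the tool would show 27,000 searches for every month, even though the keyword really gets about 8,000 in a normal month and over 220,000 in its peak month.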

4) Long-Tail Phrases Round to 0

Roughly 70% of search queries are long-tail search phrases. However, if a phrase’s search volume isn’t high enough to hit a traffic bucket, its traffic estimate gets rounded down to 0.

This is not helpful for bloggers. The vast majority of your traffic as a new blogger will be coming from these long-tail search phrases. Keyword research tools will tell you that all these phrases get roughly 0 traffic.

In June 2019, I wrote a blog post about how you’re never too old to start a business. In August alone, it had 30 impressions for “too old to start a business” while sitting on page 4 of the results. Clearly this phrase is Googled much more than is being reported.

If we head over to Ubersuggest, it tells us only 10 people Google this phrase per month. All keyword research tools will underestimate long-tail search phrases exactly like this. And there are billions of phrases like this worth targeting.

5) Google Keyword Planner Can’t Handle 10+ Word Phrases

The Google Keyword Planner flat out refuses to give traffic estimates for any 10+ word phrase.

For the vast majority of keywords, that’s not a significant problem. But, keyword research tools use the Google Keyword Planner for search volume estimates. Those keyword tools are now forced to tell you that any phrase over 9 words gets 0 traffic.

That’s obviously false. I can’t say for sure how many 10+ word phrases get traffic, but I can guarantee you it’s more than 0.

6) Search Volume isn’t Always a Great Predictor of Search Traffic

These days Google will often put up the answer to your query instead of the typical SERP. In these cases, very few people will actually click through to a website as Google has already answered their question.

These are referred to as “zero-click searches,” and an astounding 49% of Google queries are zero-click. You obviously wouldn’t want to write a blog post targeting these types of queries because they won’t bring you much traffic.

But, by using broad match data, how would you differentiate “Donald Trump age” from the keyword “Donald Trump”? You can’t.

Neither can keyword research tools. The data gets conflated together, then rounded off multiple times. This makes the data you get from the Google Keyword Planner extremely imprecise.

The Problem With Using Clickstream Data

So what exactly is clickstream data again?

Sometimes people install browser plugins or applications on their computer and don’t read the terms of service very well. Those plugins then track their entire browsing history, anonymize the data, and sell that data to 3rd parties. Those 3rd parties (like JumpShot, which has since shut down) sell the data off to keyword research companies. And keyword research companies use the data to help predict search volumes in their software tools.

The general idea behind how this works goes like this. If you capture 1% of all searches across the Internet, all you have to do is multiply that number by 100. That gives you semi-realistic traffic estimates for all keywords. Right?

Yes and no. Let’s get into the obvious problems with this approach.

1) Clickstream Doesn’t Collect a Large Enough Sample Size

If you could capture 10% of all Internet searches and multiply the result by 10, this method might work surprisingly well.

But you can’t capture that type of market share. The only company with that amount of data is Google, with a market share of over 90%. Not even Bing captures 10% of searches. I like to think that sketchy browser plugins aren’t capturing 10% of all the Internet searches currently happening.

The less data you have to work with, the harder it gets to project your results. If you only capture 0.1% of searches, you now have to multiply search queries by 1,000.

This becomes a major problem when dealing with long-tail search phrases. If somebody Googles a long tail phrase one time and your dataset is this small, your choices are to say this query gets 1,000 searches per month or 0. Either way, your estimate will probably be very wrong.
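Here is a rough Python sketch of why small samples hurt long-tail estimates so much. It assumes every search has the same independent chance of landing in the clickstream sample (a simple binomial model, which real clickstream data won't follow exactly), and the volumes below are made up for illustration.

```python
import math


def extrapolate(observed: int, sample_rate: float) -> float:
    """Scale a sampled search count up to an estimated total."""
    return observed / sample_rate


def relative_error(true_volume: float, sample_rate: float) -> float:
    """Standard error of the extrapolated estimate, as a fraction of the
    true volume, assuming each search is sampled independently."""
    return math.sqrt((1 - sample_rate) / (sample_rate * true_volume))


SAMPLE_RATE = 0.001  # capturing 0.1% of all searches

# One sighting of a long-tail phrase becomes roughly 1,000 searches.
# Zero sightings becomes 0. There is nothing in between.
print(extrapolate(1, SAMPLE_RATE))
print(extrapolate(0, SAMPLE_RATE))

# Typical error for a 50-search phrase vs. a 1,000,000-search keyword.
print(f"{relative_error(50, SAMPLE_RATE):.0%}")
print(f"{relative_error(1_000_000, SAMPLE_RATE):.0%}")
```

Under these assumptions, the estimate for a phrase with 50 real searches is typically off by several hundred percent, while the estimate for a keyword with a million searches is off by only a few percent. That matches the pattern described here: the head terms come out close enough, and the long tail is mostly noise.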

2) Clickstream Data is Biased

Clickstream data isn’t random data. You would have to be the type of person that would install a browser plugin and click “Go ahead and steal my personal data” to get included in the dataset.

Age, gender, education level, hobbies, and so on all impact what you’d be Googling. Is clickstream data getting a good sampling of all these factors? Hell no, it’s biased towards whoever they can trick into installing browser plugins.

3) Clickstream Data is Desktop Only

Nearly 60% of web traffic is from mobile phones or tablets. As far as I know, it’s not easy to install browser plugins on most mobile devices as of 2025. Nor can an app from the Apple App Store spy on your web browser the way software on a PC can.

That means the hypothetical maximum number of searches a clickstream company can capture is the roughly 40% of traffic that comes from desktops. To reach a 10% market share, they’d have to install sketchy software on 25% of all desktop browsers. I like to think that clickstream companies aren’t successfully tracking 1 out of 4 people with a PC. That’s scary.
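The 25% figure is simple division, sketched below in Python. It assumes searches split between desktop and mobile the same way overall web traffic does, and that tracked users search about as often as everyone else. Neither assumption is guaranteed.

```python
def required_install_rate(target_share: float, desktop_share: float) -> float:
    """Fraction of desktop browsers that must be tracked to capture
    target_share of all searches when only desktop can be tracked."""
    return target_share / desktop_share


# 10% of all searches, with desktop at roughly 40% of traffic.
print(f"{required_install_rate(0.10, 0.40):.0%}")  # 25%
```

Lower the target to a 1% sample and the same formula still demands tracking software on 2.5% of all desktop browsers.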

4) This Isn’t Like Polling Who You’d Vote For

If you’re polling for which political party someone will vote for in the next presidential election, there are only a few possible answers to that question. You don’t need a ton of data before you have statistically significant results within some margin of error.

But with web searches, what people are Googling is not only limitless, it’s also a moving target because it changes over time, sometimes rapidly.

Worse than that, it’s actually quite challenging for computer software to recognize that “How many Ounces in a Gallon” and “Ounces to Gallons” are effectively the same query. Google has spent a lot of money and brainpower getting its algorithms to understand this. Clickstream and keyword research companies don’t have that capability.
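To show why this is hard, here is a deliberately naive Python sketch of query matching. The stopword list and the strip-the-trailing-"s" rule are my own simplifications, not how Google or any keyword tool actually works.

```python
# Hypothetical stopword list, chosen by hand for this example.
STOPWORDS = {"how", "many", "in", "a", "to", "the", "of"}


def normalize(query: str) -> frozenset:
    """Reduce a query to a set of lowercase, roughly singular keywords."""
    tokens = query.lower().split()
    kept = [t for t in tokens if t not in STOPWORDS]
    # Crude singularization: strip one trailing "s" from each word.
    return frozenset(t[:-1] if t.endswith("s") else t for t in kept)


a = normalize("How many Ounces in a Gallon")
b = normalize("Ounces to Gallons")
c = normalize("oz per gal")

print(a == b)  # True: the hand-tuned rules catch this pair
print(a == c)  # False: same question, but abbreviations break the match
```

The rules only work because they were written with these two phrases in mind. Abbreviations, synonyms, typos, and word order all need their own special handling, and search queries throw up endless variations of each.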

Conclusion: How Does This Help You?

OK, keyword research tools can’t accurately predict search volumes. How does this help you? Here are three key takeaways that will help you with your blogging journey.

1) High Volume Keyword Estimates Are Off But Still Worthwhile

For high volume keywords, keyword research tools will be off by 30% or potentially much more.

However, if a query only gets 17,000 searches as opposed to 30,000 searches per month or vice-versa, do you actually care? Does that change whether you’d target the keyword or not?

As a new blogger, I’d happily write a post that was bringing in 1,000+ visitors per month to my website. I care a lot more about whether I have a chance to compete for these high-volume keywords than I do about the exact amount of traffic they get. For most small bloggers looking at high volume keywords, these tools are close enough.

2) Long-Tail Volume Estimates Are Bad to Non-Existent

Roughly 70% of search queries are long-tail keywords. Keyword research tools will claim many of these queries get 0 traffic.

This is where these tools miss the mark. For small bloggers, there are decent search volumes to be had that can’t be found using these tools.

This is particularly true when a blog post ranks for a large number of long-tail keywords. You stand to get a lot of traffic when you add up ALL of the long-tail phrases you qualify for. But, none of those phrases register as getting traffic in keyword research tools. So how do you find these keywords?

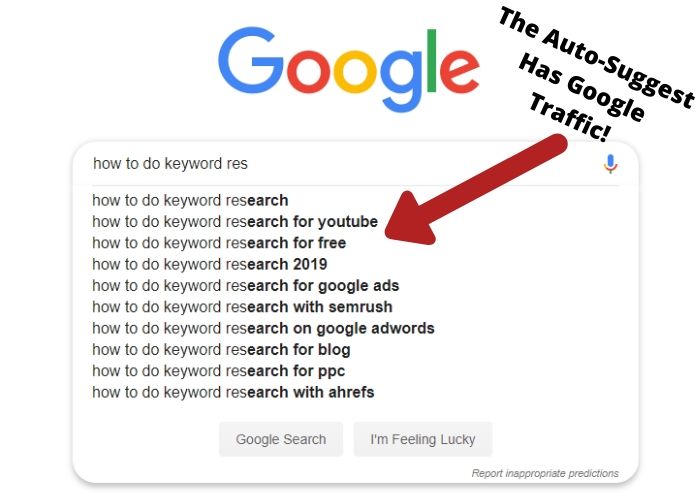

3) Use Google Autosuggest and YouTube to Validate Long Tail Search Phrases

Google only auto-suggests phrases that get traffic, at least when you’re in Chrome’s incognito mode and haven’t entered the phrase yourself. You won’t be able to tell if they’re getting dozens or hundreds of searches. But, you know somebody is searching for it.

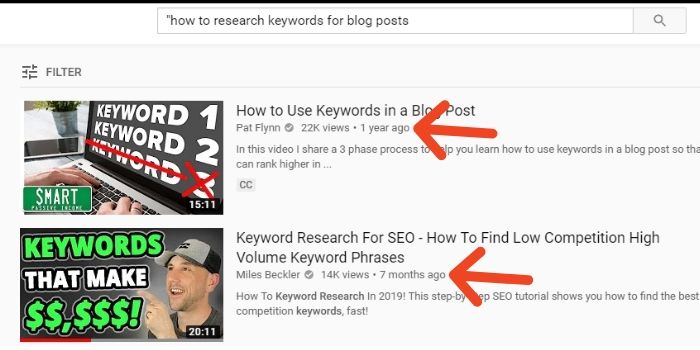

From there, I like to take my keyword ideas and validate them with YouTube. YouTube is helpful in that it exposes the view count of videos. You can be confident that your keyword phrase gets some traffic if videos targeting it are getting views on YouTube.

And that’s really it. Check out my blog post on how to do keyword research as a new blogger for more in-depth keyword research tips.